Program & faculty evaluation

Introduction

Graduate medical education (GME), also known as residency and fellowship training, is the formal confluence of medical education and care delivery. GME is a critical period in the development of a doctor of medicine. Through successful completion of a GME program, the doctor of medicine evolves from a student to a physician (and surgeon) who is able to practice competently and independently without supervision (1).

GME environment

The health care environment is ever shifting, and some argue that the tempo of change is faster than ever. The aging and growing population, exponential growth in medical discovery, increasing reliance on technology, instantaneous communication, and the digitalization of life and health data are pervasive currents that exert pressure on health care delivery systems. GME resides in this environment and must continually adapt to keep pace with changes in health care. In comparing physicians of a few decades ago to those entering practice today, a stark difference in the necessary skill set is apparent. Today’s physicians are expected to be well equipped to rapidly access medical information, health data, social resources and practice management tools while focusing on issues such as systems thinking, practice improvement, population health, health equity, inter-professional collaboration, and personal resilience and well-being. In order to foster a health care system and learning environment that trains physicians who provide reliable, high quality, affordable, patient-centered care, GME curricula must continually adjust to the forces that shape health care. For an educational curriculum to remain relevant, the training program must repeatedly receive feedback on trainee, faculty and program performance and outcomes and feed forward navigational changes in response to that feedback. Much like a jet continually records and receives feedback from the environment, aircraft conditions and pilot behavior in order to navigate safely and efficiently through a variety of influential elements, GME is increasingly using performance data to ascertain and adjust the trajectory of trainee development with the aim of producing skilled, safe and effective physicians and surgeons who serve the public.

For decades, performance evaluation relied primarily on scrutinizing the individual trainee and driving improvement in performance through that individual. Although attention to the performance of the individual trainee is central in the development of a competent physician, our improved understanding of complex systems, such as health care, requires GME programs to scrutinize and assess programmatic and institutional factors that influence the performance of a trainee or cohort of trainees. In recent years, education and training programs have been evolving to adapt to these changes. In order to continually improve GME, support and direction from broader systems are often necessary to set standards for GME across educational institutions.

Accreditation council for GME

In the United States, training programs are largely accredited by the Accreditation Council for Graduate Medical Education (ACGME). The ACGME is a not-for-profit organization that sets standards for US GME (residency and fellowship) programs and the institutions that sponsor them. The ACGME renders accreditation decisions based on compliance with specialty-specific program requirements. Accreditation is achieved through a voluntary process of evaluation and review based on published accreditation standards and provides assurance to the public that hospitals and training programs meet the quality standards (Institutional and Program Requirements) of the specialty or subspecialty practice. ACGME accreditation is overseen by a review committee made up of volunteer specialty experts from the field who set accreditation standards and provide peer evaluation of hospitals as well as specialty and subspecialty residency/fellowship programs. Accreditation determinations are made based on a review of information about trainee performance as well as the training program and its educational environment. More recently, an initiative to accredit programs outside the United States has been under development through the international section of the ACGME (iACGME).

Program directors of residency and fellowship programs are responsible not only for determining whether individual trainees have met educational goals but also for ensuring that the quality and environment of the training program are conducive to educating the physician. The program director is therefore reliant on information about faculty performance and assessment of the training program environment. Since each training program has specialty- and institution-specific characteristics, program evaluation must have sufficient rigor to satisfy accreditation requirements yet be flexible and responsive to the uniqueness of individual educational programs (1-5).

The ACGME requires that residents and faculty evaluate the training program at least annually and that the evaluation results be used at the Annual Program Review (APR) meeting to assess the program’s performance and set future goals. Typically, each institution has a GME committee that also reviews programs within the institution as a method for identifying programs that require aid or that demonstrate exemplary practices.

Program evaluation

Program evaluation is a systematic collection of information about a broad range of topics for use by specific people for a variety of purposes. In the case of GME, program evaluation aims to assess how well a residency or fellowship program is educating doctors to become competent physicians (and surgeons) who are able to practice independently and without supervision. Each program evaluation system has a collection of major stakeholders. In the setting of GME, the major stakeholders are patients, trainees, faculty, the institution, and the public. Presently, all GME programs systematically collect an array of quantitative and qualitative information regarding trainee performance, graduate performance relative to national standards, educational experiences, faculty teaching and mentoring, curriculum oversight and reviews, and institutional resources. All data collection, interpretation and implementation of adjustments must be directed towards improving the experiences of the stakeholders. The goals of program evaluation in GME include determining how to improve the educational program; identifying what needs replacement, refinement, or elimination; constructing steps to implement change; and measuring outcomes and reassessing effectiveness (1-5).

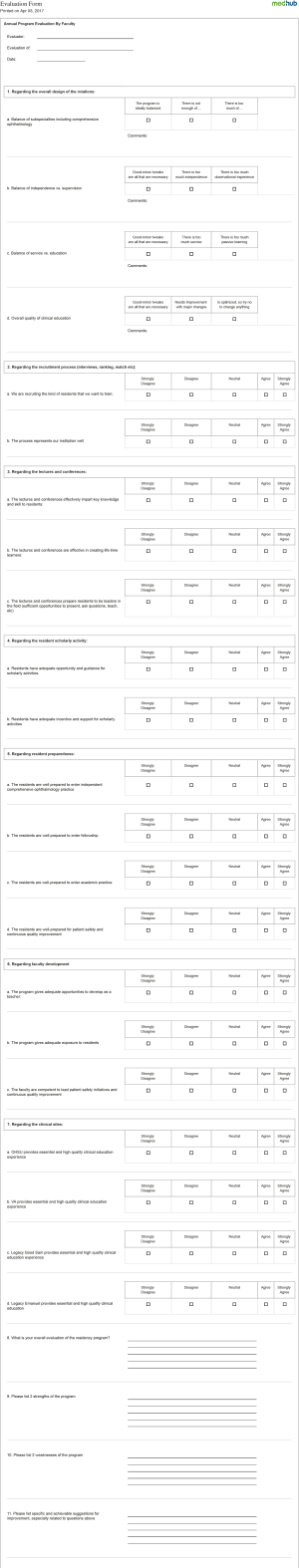

As noted above, all training programs have unique attributes; therefore, evaluations must be tailored to each environment so that areas specific to the training program are addressed. Although program evaluations share common inquiry points, a universal program evaluation form cannot be applied to all programs and remain pertinent. In general, program evaluations should align with the overall goals and objectives of the program. Each program will consider which of its objectives and outcomes would be useful to evaluate or measure. Some of the educational areas to be surveyed include didactic experiences, clinical rotations, training sites, and procedural or surgical experiences, as well as access to educational resources and a clinical realm conducive to delivering effective care. In addition, assessment of faculty engagement in education, opportunity for scholarly activity, institutional support and availability of the program director and coordinator or other educational leaders is important. Typically, both trainees and faculty are surveyed to express their view of the educational program. Program evaluation should allow for collection of open-ended responses about program strengths, areas of development and deficiencies.

Figure S1: Casey Eye Institute, Oregon Health & Science University Program Evaluation form delivered through an electronic resident management system (MedHub: http://www.medhub.com/). Results are collated and anonymized.

In an effort to derive meaningful feedback, the program must emphasize the confidential nature of the responses. The program must also assure the anonymity of the respondents. Currently, programs use web-based resident management systems to deliver surveys. The resident management systems are designed to anonymize respondents (particularly when they are trainees), collate responses, and generate reports that represent the collective trainee and faculty sentiment about the educational experience at a training program and serve as feedback to the program leadership regarding the educational environment.

Faculty evaluation

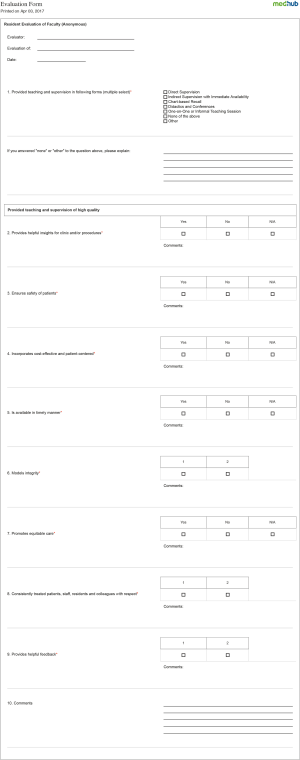

Evaluation of faculty performance has tremendous influence on the educational environment of a training program. Faculty evaluations help the faculty and program administration to identify and encourage excellence in education. Faculty evaluations help not only in developing trainees but also in developing faculty to become effective educators. A positive approach to faculty evaluation is essential. Evaluation reports that focus on areas of strength and areas for improvement can provide a basis for the long-term development of effective educators and help in their promotion and tenure as well as career fulfillment. Typically, the dean, department chair, division chief and/or promotion and tenure committee have the responsibility for oversight of this process. Evaluation should determine the extent to which the work of an individual faculty member contributes to the mission of the academic institution and the assigned unit. Therefore, faculty evaluation should be based on an explicit statement of expectations within the department or program, much like goals and objectives are important in residency and fellowship training programs. Faculty evaluation specific to educational activities should be incorporated within the institution’s web-based resident management system in order to ensure confidentiality and anonymity of respondents and allow for collation of data and generation of reports.

Figure S2: Casey Eye Institute, Oregon Health & Science University Faculty Evaluation form delivered through an electronic resident management system (MedHub: http://www.medhub.com/). The form is completed by trainees, the respondents are masked, and the results are collated.

ACGME resident/fellow and faculty surveys

In addition to individual programs delivering their own evaluation forms to trainees and faculty, the ACGME annually delivers both a resident/fellow survey and a faculty survey to all residents and core faculty at accredited training institutions across the country. The ACGME’s resident/fellow and faculty surveys are an additional method used to monitor graduate medical clinical education and provide early warning of potential non-compliance with ACGME accreditation standards. All specialty and subspecialty programs (regardless of size) are required to participate in these surveys each academic year. When programs meet the required compliance rates for each survey, reports are provided that aggregate their survey data to give an anonymous look at how that program compares against national, institutional, and specialty averages (6).

ACGME resident/fellow survey

All ACGME-accredited specialty and subspecialty programs with active residents (regardless of program size) are surveyed each academic year. The ACGME Resident/Fellow survey contains questions about the clinical and educational experiences within the trainees’ program (7). The survey is completed only by residents/fellows. Program administration does not have access to the survey or to any individual resident or fellow’s responses. When at least 70% of a program’s residents/fellows have completed the survey and at least four residents/fellows have been scheduled, reports are made available to the program annually. For those programs with fewer than four residents/fellows scheduled for the survey that meet the 70% compliance rate, reports are available only on a multi-year basis after at least 3 years of survey reporting, but may contain up to 4 years of data. The ACGME Resident/Fellow Survey Content Areas are listed in Figure 1 (7).
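The reporting thresholds described above can be expressed as a small decision rule. The following is only an illustrative sketch, not an ACGME tool: the class and function names are hypothetical, the rule is read as requiring both the 70% completion rate and at least four scheduled residents/fellows for an annual report, and "3 years of survey reporting" is assumed to mean three survey years in which the 70% rate was met.

```python
from dataclasses import dataclass

MIN_RATE_PERCENT = 70   # completion threshold stated in the text
MIN_SCHEDULED = 4       # scheduled residents/fellows needed for an annual report
MIN_YEARS_SMALL = 3     # reporting years before a small program gets a report


@dataclass
class ResidentSurveyYear:
    """One academic year of survey participation (hypothetical structure)."""
    scheduled: int   # residents/fellows scheduled for the survey
    completed: int   # residents/fellows who completed it

    def meets_rate(self) -> bool:
        # Integer arithmetic avoids floating-point edge cases at exactly 70%.
        return self.scheduled > 0 and (
            self.completed * 100 >= MIN_RATE_PERCENT * self.scheduled
        )


def report_type(history: list[ResidentSurveyYear]) -> str:
    """Classify the report for the most recent year in `history`.

    Returns "annual", "multi-year", or "none".
    """
    if not history:
        return "none"
    latest = history[-1]
    if not latest.meets_rate():
        return "none"
    if latest.scheduled >= MIN_SCHEDULED:
        return "annual"
    # Assumption: only years that met the completion rate count toward
    # the three years of reporting required of small programs.
    compliant_years = sum(1 for year in history if year.meets_rate())
    return "multi-year" if compliant_years >= MIN_YEARS_SMALL else "none"
```

Under these assumptions, a program with ten scheduled residents and seven completions would receive an annual report, whereas a three-resident program with full participation would receive a multi-year report only from its third compliant year onward.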

ACGME faculty survey

The ACGME requires faculty members to complete annually an online survey that contains questions about faculty members’ experiences working within their program, as well as their interactions with the residents/fellows in training. The survey is to be completed only by identified faculty members (6,8). The program administration does not have access to the survey or to any responses provided by individual faculty members. When at least 60% of a program’s faculty members have completed the survey and at least three faculty members have responded, reports are made available to the training program annually. Programs with fewer than three faculty members participating in the survey should reach a 100% response rate. Review Committees closely monitor programs’ response rates and review programs that fail to meet this requirement. The ACGME Faculty Survey Content Areas are listed in Figure 2 (8).
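The faculty survey response requirement can be sketched in the same way. This is an illustrative reading of the thresholds stated above, not an official ACGME calculation; the function name and its parameters are hypothetical.

```python
def faculty_response_requirement_met(identified: int, responded: int) -> bool:
    """Check the faculty survey response thresholds described in the text.

    identified: faculty members identified to take the survey
    responded:  faculty members who completed it
    """
    if identified <= 0 or responded > identified:
        return False
    if identified < 3:
        # Programs with fewer than three participating faculty members
        # are expected to reach a 100% response rate.
        return responded == identified
    # Otherwise: at least 60% completion and at least three respondents.
    return responded >= 3 and responded * 100 >= 60 * identified
```

For example, six of ten faculty members responding satisfies both conditions, while two of three does not, because fewer than three faculty members have responded even though the 60% rate is met.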

The Program Evaluation Committee (PEC)

In past years, carrying out the necessary changes to improve the educational program was commonly the responsibility of the program director alone. In recent years, GME programs have increasingly relied on a PEC to actively participate in making the necessary changes to the educational environment. Presently, the ACGME has established guidelines for the PEC, and the presence of a PEC is a requirement for accredited GME programs (1-5,9).

The goal of the PEC is to make annual navigational changes that improve the educational program. Current ACGME guidelines indicate that the program director appoints the PEC members. The group may be composed of the entire faculty, but more often it is made up of a small group of faculty and/or associate program directors. At minimum, the PEC must be composed of two faculty members. The program director may be one of those two faculty members. At least one trainee must be a PEC member. In smaller programs or in programs where no trainee is enrolled in a particular year, the PEC may not include a trainee. The absence of any actively enrolled trainee is the only instance in which the absence of a trainee PEC member is acceptable. Because of the many configurations of programs and support structures, there are no requirements on how the PEC is to carry out its duties. Each program is free to develop a meeting schedule or assign responsibilities as it sees fit. Other than the program director appointing members, the relationship between the program director and the PEC is for each program to decide. Some PECs may be active all year long, while others may rely on the program director to implement improvements.
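The minimum composition rules above lend themselves to a simple check that a program coordinator might run when a roster changes. The sketch below is purely illustrative: the `PECRoster` structure and the `composition_problems` function are hypothetical names, and only the rules stated in this section are encoded.

```python
from dataclasses import dataclass


@dataclass
class PECRoster:
    """Hypothetical summary of a Program Evaluation Committee roster."""
    faculty_members: int     # includes the program director if serving
    trainee_members: int     # residents/fellows appointed to the PEC
    enrolled_trainees: int   # trainees actively enrolled in the program


def composition_problems(roster: PECRoster) -> list[str]:
    """Return a list of unmet minimum requirements (empty if none)."""
    problems = []
    if roster.faculty_members < 2:
        problems.append("at least two faculty members are required")
    # A missing trainee member is acceptable only when no trainee
    # is actively enrolled in the program.
    if roster.trainee_members < 1 and roster.enrolled_trainees > 0:
        problems.append("at least one trainee member is required")
    return problems
```

An empty list indicates that the roster meets the stated minimum; the check says nothing about meeting schedules or duties, which the guidelines leave to each program.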

A written description of the committee and its members’ responsibilities must be available to and understood by participants. An essential role of the PEC is to participate actively in efforts to improve the educational curriculum. The PEC does not track individual trainee performance. This key aspect differentiates the PEC from the Clinical Competency Committee (CCC), whose role is to track individual trainees’ performance vis-à-vis training-specific milestones.

The PEC actively participates in developing, implementing, and evaluating educational activities of the program; reviewing and making recommendations for revision of competency-based curriculum goals and objectives; addressing areas of non-compliance with ACGME standards; and reviewing the program annually using evaluations of the program, faculty, and the trainees. Although the PEC is required to meet at least annually, it may certainly meet more often. The PEC also monitors aggregate trainee performance, faculty development, graduate performance on the certification examinations and program quality. In order to ascertain program quality, trainees and faculty must have the opportunity to evaluate the program confidentially and in writing at least annually, and the program must use the results of residents’ and faculty members’ assessments of the program together with other program evaluation results to improve the program. Although the PEC’s responsibility is to address areas of non-compliance with minimum ACGME standards, the PEC is encouraged to improve and innovate the program curriculum to go beyond the minimum.

APR

After reviewing the program, the PEC prepares a written summary of its findings and conclusions and a plan of action to document initiatives to improve performance. The PEC will delineate how activities will be measured and monitored. The synthesis of findings, recommendations for change and implementation of action plans are presented as an annual program evaluation (APE) document. Such a document tracks ongoing improvements of the program and serves as a navigational plan and a verification of progress. The action plan is reviewed and approved by the teaching faculty during an APR to ensure there is widespread agreement and support, and is documented in meeting minutes. While the APE does not have to be submitted to the ACGME each year, it is customary for the training program’s office of GME to expect an APE report from the APR.

In instances where the PEC is unable to implement changes on its own, working with the institutional GME committee, department chair, and/or designated institutional official (DIO) may be necessary. A common phenomenon in committee dynamics is that while improvements are suggested, they are not always implemented. The reasons for lack of implementation are diverse in a group activity; however, a key responsibility of the PEC is to ensure that momentum toward improvement in education continues. The PEC should keep a record of its decisions, including which suggested improvements should be explored. Practical limitations or inadequate resources may limit implementation of innovative ideas. For those areas where there is a decision for a change, there should be a plan to verify that the result was positive. Simply asking the trainees and faculty members might be sufficient, but verification might be as complex as measuring the impact of the change on patient care outcomes. This information should be included in the APE, which is then used by the program to identify areas for improvement and track the efforts of the program to effect changes. The PEC must ensure that suggestions for improvement are not forgotten, even though suggestions for program improvement may require several years to accomplish.

Conclusions

GME has shifted its curricula from a process-oriented approach to outcomes-oriented models. Program and faculty evaluation are methods by which educational curricula may adjust the teaching and learning environment to meet the needs and fill the gaps in GME. The measurement of educational outcomes is essential for assessing teaching effectiveness in a shifting health care environment. In addition to trainee, program, and faculty evaluations, the APR and the navigational changes made by the Program Evaluation Committee are essential to maintain the effectiveness of an educational curriculum in a contemporary graduate medical training program.

Acknowledgments

Funding: This work was supported by an unrestricted grant from Research to Prevent Blindness, New York, USA, and the Casey NIH Core grant (P30 EY010572), Bethesda, Maryland, USA.

Footnote

Provenance and Peer Review: This article was commissioned by the Guest Editors (Karl C. Golnik, Dan Liang and Danying Zheng) for the series “Medical Education for Ophthalmology Training” published in Annals of Eye Science. The article has undergone external peer review.

Conflicts of Interest: The author has completed the ICMJE uniform disclosure form (available at http://dx.doi.org/10.21037/aes.2017.06.02). The series “Medical Education for Ophthalmology Training” was commissioned by the editorial office without any funding or sponsorship. The author has no other conflicts of interest to declare.

Ethical Statement: The author is accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved.

Open Access Statement: This is an Open Access article distributed in accordance with the Creative Commons Attribution-NonCommercial-NoDerivs 4.0 International License (CC BY-NC-ND 4.0), which permits the non-commercial replication and distribution of the article with the strict proviso that no changes or edits are made and the original work is properly cited (including links to both the formal publication through the relevant DOI and the license). See: https://creativecommons.org/licenses/by-nc-nd/4.0/.

References

1. Evaluation. Available online: http://www.acgme.org/Portals/0/PDFs/commonguide/VA2_Evaluation_ResidentSummativeEval_Explanation.pdf

2. Musick DW. A conceptual model for program evaluation in graduate medical education. Acad Med 2006;81:759-65.

3. Thibault GE. The Importance of an Environment Conducive to Education. J Grad Med Educ 2016;8:134-5.

4. Lypson ML, Prince ME, Kasten SJ, et al. Optimizing the post-graduate institutional program evaluation process. BMC Med Educ 2016;16:65.

5. Durning SJ, Hemmer P, Pangaro LN. The structure of program evaluation: an approach for evaluating a course, clerkship, or components of a residency or fellowship training program. Teach Learn Med 2007;19:308-18.

6. Accreditation Council for Graduate Medical Education. Resident/Fellow and Faculty Surveys. Available online: http://www.acgme.org/Data-Collection-Systems/Resident-Fellow-and-Faculty-Surveys

7. Accreditation Council for Graduate Medical Education. ACGME Resident Survey Content Areas. Available online: http://www.acgme.org/Portals/0/ResidentSurvey_ContentAreas.pdf

8. Accreditation Council for Graduate Medical Education. ACGME Faculty Survey Question Content Areas. Available online: http://www.acgme.org/Portals/0/ACGME%20FacultySurvey%20QuestionContentAreas.pdf

9. Association of American Medical Colleges. Optimizing Graduate Medical Education: A Five-Year Road Map for America’s Medical Schools, Teaching Hospitals and Health Systems. Available online: https://www.aamc.org/download/425468/data/optimizinggmereport.pdf

Cite this article as: Lauer AK. Program & faculty evaluation. Ann Eye Sci 2017;2:44.